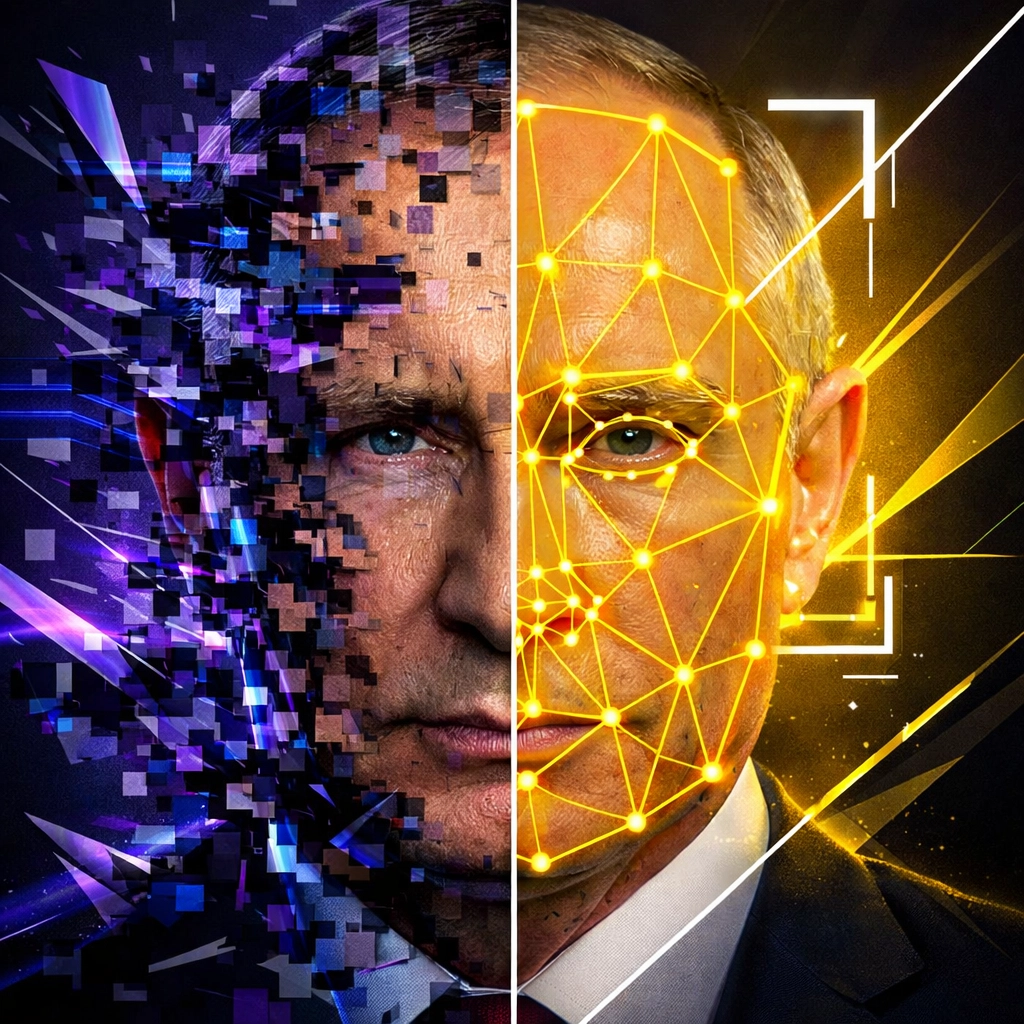

A video surfaces three days before the election.

Your candidate. Saying something they never said. Doing something they never did.

It's everywhere. Twitter. Facebook. WhatsApp groups. Local news stations are calling for comment.

You have hours, not days, to respond.

This isn't a hypothetical anymore.

Welcome to 2026, where deepfakes aren't just a tech buzzword. They're a weapon. And your campaign is the target.

The Liar's Dividend Is Already Cashing Out

Here's the terrifying part: deepfakes create a dual-layer attack on your campaign.

Layer one: synthetic videos and audio recordings that fabricate statements, scandals, or endorsements you never made.

Layer two: the "liar's dividend," where real footage gets dismissed as fake. Where truth becomes negotiable. Where your actual statements can be waved away by opponents claiming "it's obviously doctored."

Recent casualties?

A deepfake video of Rep. Alexandria Ocasio-Cortez fooled at least one major news commentator. An AI-generated imitation of Secretary of State Marco Rubio's voice was used to contact at least five senior figures, including three foreign ministers. Reports have also tied Russia-linked actors to similar impersonation efforts. Sophisticated execution.

And that was just the warm-up.

By 2026, synthetic impersonation isn't a novelty threat. It's mainstream campaign warfare.

China. Russia. Iran. Domestic bad actors. They're all exploiting these tools to erode trust in political leaders, shift public opinion, and create chaos during critical election windows.

Your opponent might not even be the one deploying them.

But you'll be the one dealing with the fallout.

Why Your Current Strategy Won't Cut It

Most campaigns approach digital security like it's 2016.

A social media manager. A press secretary. Maybe a crisis comms consultant on speed dial.

That's not a defense strategy. That's a Band-Aid on a bullet wound.

Here's what fails:

Traditional fact-checking is too slow. By the time reputable outlets debunk a deepfake, it's already been shared 50,000 times.

Legal action is reactive. You can threaten lawsuits, but the damage happens before your attorney even drafts the cease-and-desist.

Platform reporting is inconsistent. Facebook might take down the video. Twitter might not. Telegram definitely won't.

And hoping "the truth will come out"?

That's not a strategy. That's a prayer.

The AI-First Defense Framework

An AI-first campaign strategy isn't about using AI to create content (though that's part of it).

It's about building an intelligent defense infrastructure that detects, responds to, and neutralizes threats in real time.

Think of it as your campaign's immune system.

Detection Layer: Eyes Everywhere

Deploy AI-based monitoring tools that scan social platforms, news sites, and public messaging channels 24/7. Microsoft's Video Authenticator. Meta's deepfake detection technologies. Custom solutions that analyze pixel variability, lighting inconsistencies, and speech pattern anomalies.

These tools flag suspicious content the moment it appears, not three days later when your comms director sees it trending.
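What might the triage step behind that flagging look like? Here is a minimal sketch in Python. It assumes some detection tool has already produced a 0-to-1 "likely synthetic" score for each item; the `FlaggedItem` fields, the `triage` function, and the 0.7 threshold are all hypothetical names and values chosen for illustration, not the API of any product named above.

```python
from dataclasses import dataclass


@dataclass
class FlaggedItem:
    url: str
    detector_score: float  # hypothetical 0.0-1.0 score from whatever detector is in use
    shares_per_hour: int   # how fast the item is currently spreading


def triage(items, score_threshold=0.7, top_n=5):
    """Keep items the detector considers likely synthetic, fastest-spreading first."""
    suspicious = [item for item in items if item.detector_score >= score_threshold]
    # Sort by spread velocity so the team sees the most urgent items at the top.
    ranked = sorted(suspicious, key=lambda item: item.shares_per_hour, reverse=True)
    return ranked[:top_n]


if __name__ == "__main__":
    queue = [
        FlaggedItem("https://example.com/clip-a", 0.92, 100),
        FlaggedItem("https://example.com/clip-b", 0.40, 9000),
        FlaggedItem("https://example.com/clip-c", 0.81, 500),
    ]
    for item in triage(queue):
        print(item.url, item.detector_score, item.shares_per_hour)
```

Ranking by spread rather than by score alone is one possible design choice: a moderately suspicious clip that is going viral usually deserves human eyes before a near-certain fake that nobody has seen.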

Rapid Response Protocol: The 222 Rule

Taiwan's government operates on a "222 policy" for disinformation response:

- 200 words

- 2 photos

- 2 hours

Two hours from detection to public response. Not two days. Not "we're looking into it."

Your campaign needs the same speed. Pre-approved messaging templates. Pre-cleared spokespeople. Pre-established distribution channels.

When a deepfake drops, you're not scrambling. You're executing a playbook.
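A playbook is easier to execute when the limits are checked automatically. The sketch below shows one way a draft response could be tested against the 222 numbers before it goes out. It treats the three figures as upper limits (at most 200 words, at most 2 photos, at most 2 hours since detection), which is an assumption about how a campaign would apply the rule; `check_222` and its parameters are hypothetical names.

```python
from datetime import datetime, timedelta

MAX_WORDS = 200
MAX_IMAGES = 2
MAX_DELAY = timedelta(hours=2)


def check_222(text, image_count, detected_at, now):
    """Return a list of problems; an empty list means the draft fits the 222 limits."""
    problems = []
    word_count = len(text.split())
    if word_count > MAX_WORDS:
        problems.append(f"too long: {word_count} words (limit {MAX_WORDS})")
    if image_count > MAX_IMAGES:
        problems.append(f"too many images: {image_count} (limit {MAX_IMAGES})")
    if now - detected_at > MAX_DELAY:
        problems.append("response window of 2 hours has already passed")
    return problems


if __name__ == "__main__":
    detected = datetime(2026, 1, 1, 9, 0)
    draft = "The video circulating today is fabricated. Here is the original footage."
    print(check_222(draft, 2, detected, detected + timedelta(minutes=45)))
```

Returning a list of problems rather than a single pass/fail flag lets the comms lead see everything that needs fixing in one look.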

Pre-Bunking Infrastructure: Inoculation Strategy

Don't wait for attacks to happen. Predict them.

Identify the narratives your opposition might exploit. Then distribute accurate information through those same channels before the deepfakes appear.

Education materials. Voter outreach. Transparency about your candidate's actual positions.

You're vaccinating your audience against misinformation.

Legal Compliance Architecture

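The regulatory landscape is shifting fast. The EU's AI Act includes transparency obligations that require AI-generated or manipulated media to be labeled. In the U.S., members of Congress have introduced bills such as the DEEPFAKES Accountability Act and the Protect Elections from Deceptive AI Act.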

Your campaign needs to know:

- What AI-generated content requires disclosure

- Which platforms have specific deepfake policies

- How to document and preserve evidence for potential legal action

- What constitutes actionable defamation versus protected speech

This isn't optional. It's infrastructure.
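Evidence preservation is the most mechanical item on that list, so here is a rough sketch of it. It fingerprints a captured file with SHA-256 (via Python's standard `hashlib`) and appends a timestamped record to a log, so the team can later show the file has not changed since capture. The record fields, function names, and the JSON-lines log format are illustrative assumptions; what a court or platform actually requires is a question for your counsel.

```python
import hashlib
import json
from datetime import datetime, timezone


def evidence_record(content: bytes, source_url: str, captured_at=None) -> dict:
    """Build a record that fingerprints captured content at a point in time."""
    captured_at = captured_at or datetime.now(timezone.utc)
    return {
        "source_url": source_url,
        "sha256": hashlib.sha256(content).hexdigest(),
        "size_bytes": len(content),
        "captured_at_utc": captured_at.isoformat(),
    }


def append_to_log(record: dict, log_path: str) -> None:
    """Append one record per line so earlier entries are never rewritten."""
    with open(log_path, "a", encoding="utf-8") as log_file:
        log_file.write(json.dumps(record, sort_keys=True) + "\n")


if __name__ == "__main__":
    video_bytes = b"placeholder for downloaded video bytes"
    record = evidence_record(video_bytes, "https://example.com/suspect-video")
    append_to_log(record, "evidence_log.jsonl")
    print(record["sha256"])
```

The append-only log is a deliberate choice: each capture adds a line and nothing is edited in place, which keeps the history simple to audit.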

Why Campaigns Choose XStream

Here's what separates winning campaigns from cautionary tales in 2026:

Technical sophistication meets political savvy.

At XStream, we don't just understand AI detection algorithms. We understand campaign cycles. Voter psychology. Crisis communications. Media narratives.

We've built AI-first strategies for organizations that needed to protect their reputation, maintain message discipline, and dominate digital spaces, often simultaneously.

Our approach?

We integrate detection technology with rapid response infrastructure. We train your team on pre-bunking strategies. We develop content that's authentic, compliant, and impossible to weaponize.

We create a digital defense perimeter around your campaign.

That means:

- Real-time monitoring dashboards that flag suspicious content across platforms

- Pre-built response protocols customized to your candidate and messaging

- Voter education campaigns that inoculate against common disinformation tactics

- High-quality video production that establishes visual authenticity (making deepfakes easier to spot)

- Legal documentation systems that preserve evidence and ensure compliance

But here's the part most agencies miss:

Defense alone isn't enough.

From Defense to Dominance

An AI-first strategy doesn't just protect you from deepfakes.

It positions you to dominate the digital battlefield.

While your opponents are scrambling to debunk false narratives, you're flooding the zone with authentic, high-engagement content. Professional livestreams. Compelling short-form videos. Data-driven ad targeting that reaches persuadable voters with surgical precision.

You're not playing defense.

You're playing chess while they're playing checkers.

The campaigns that win in 2026 won't be the ones with the biggest war chests.

They'll be the ones with the smartest tech infrastructure. The fastest response times. The most authentic digital presence.

They'll be the ones who understood that AI isn't a threat to navigate.

It's a strategic advantage to weaponize.

The Choice Is Simple

You can approach 2026 like it's 2020.

Hope your candidate doesn't become a deepfake target. React when crises hit. Rely on traditional media to set the record straight.

Or you can build a campaign that's ready for the reality of modern political warfare.

Detection systems that never sleep. Response protocols that execute in hours, not days. Content strategies that establish authenticity before attacks even begin.

The deepfakes are coming.

The foreign interference is happening.

The disinformation campaigns are already being planned.

The question isn't whether your campaign will face these threats.

It's whether you'll be ready when they arrive.

Ready to build an AI-first defense strategy for your 2026 campaign? Contact XStream and let's talk about protecting your candidate, your message, and your chance to win.